Trump-appointed judges refuse to block Trump blacklisting of Anthropic AI tech

Appeals court denies Anthropic's emergency motion for a stay.

A federal appeals court refused to halt the Trump administration's efforts to blacklist Anthropic yesterday, denying the company's emergency motion for a stay. But the court granted the US-based AI firm's request to expedite the case and will hold oral arguments on May 19.

The ruling by the US Court of Appeals for the District of Columbia Circuit was issued by a panel of three judges appointed by Republicans, including Trump appointees Gregory Katsas and Neomi Rao. Katsas previously served as deputy counsel to the president during Trump's first term, while Rao served in the Trump administration's Office of Management and Budget.

The judges' decision is a setback for Anthropic, but it's only one of two cases it filed against the Trump administration, and the AI firm has had more success in the other one.

Anthropic says it exercised its First Amendment rights by refusing to let Claude AI models be used for autonomous warfare and mass surveillance of Americans, and that Trump and Defense Secretary Pete Hegseth blacklisted it in retaliation. Trump directed all federal agencies to stop using Anthropic technology, and Hegseth labeled Anthropic a "Supply-Chain Risk to National Security," prohibiting military contractors from doing business with Anthropic.

Related articles

Forecasters predict below-average hurricane season, advise against complacency

Forecasters say expected El Niño should temper hurricanes in Atlantic, urge preparedness.

AI will no longer write code full of holes (or at least it will try not to). Anthropic has released a security plugin for Claude Code

Can an algorithm replace the critical eye of a strict team lead?

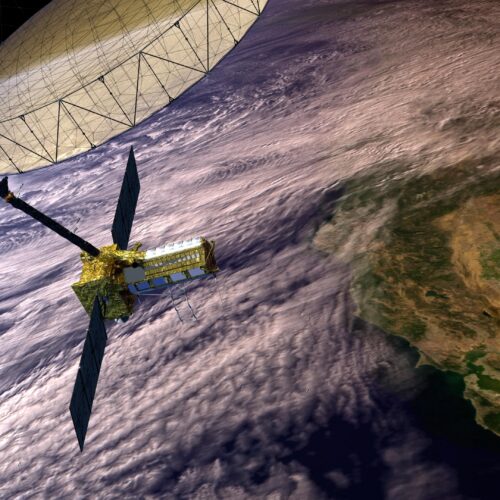

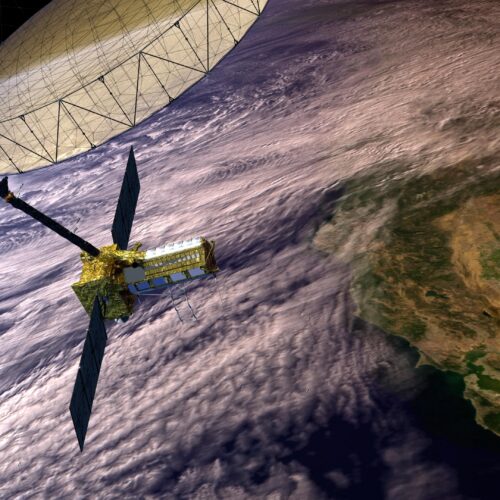

Mystery GPS jammer in Iran becomes test for NASA satellites’ capabilities

NASA science satellites show dual use in locating sources of GPS interference.

Mina the Hollower is the best old-school action adventure I've played in a while

Smooth movement, compelling combat, and tons of secrets make for an innovative throwback.

More from Ars Technica

California defeats Tesla's attempt to throw out racial discrimination lawsuit

California civil rights agency hails win over Tesla, anticipates trial in July.

Websites have a new way to spy on visitors: analyzing their SSD activity

Telltale SSD activity can be measured in the browser using simple JavaScript.
